How to Run a 1.7B Parameter LLM in Your Browser With WebGPU

Learn how 1-bit quantized LLMs like Bonsai 1.7B fit in 290MB and run locally in your browser using WebGPU compute shaders.
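
As a rough sanity check on those figures, the sketch below works out how many bytes 1.7 billion weights need at one bit each, and how many bits per weight a 290 MB download implies. It assumes "290MB" means decimal megabytes and that the parameter count is exactly 1.7e9. The helper name `weightBytes` is made up for illustration, and the code does not describe how Bonsai actually lays out its weights.

```typescript
// Back-of-envelope size check for the figures quoted above.
// Assumptions: 1.7e9 parameters, and "290MB" read as 290 * 10^6 bytes.
const PARAMS = 1.7e9;
const QUOTED_BYTES = 290e6;

// Bytes needed to store `params` weights at `bitsPerWeight` bits each.
// (Hypothetical helper, not part of any library.)
function weightBytes(params: number, bitsPerWeight: number): number {
  return (params * bitsPerWeight) / 8;
}

const oneBitMB = weightBytes(PARAMS, 1) / 1e6;     // pure 1-bit weights
const fp16MB = weightBytes(PARAMS, 16) / 1e6;      // same model at 16 bits per weight
const effectiveBits = (QUOTED_BYTES * 8) / PARAMS; // bits per weight implied by 290 MB

console.log(`1-bit weights only: ${oneBitMB.toFixed(1)} MB`);
console.log(`16-bit weights:     ${fp16MB.toFixed(0)} MB`);
console.log(`Implied by 290 MB:  ${effectiveBits.toFixed(2)} bits per weight`);
```

Under these assumptions the pure 1-bit payload comes to 212.5 MB, so the quoted 290 MB leaves room for whatever is stored at higher precision. What exactly fills that gap (scale factors, embeddings, or other tensors) is not stated in the summary and is not assumed here.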

webgpu, machinelearning, webdev

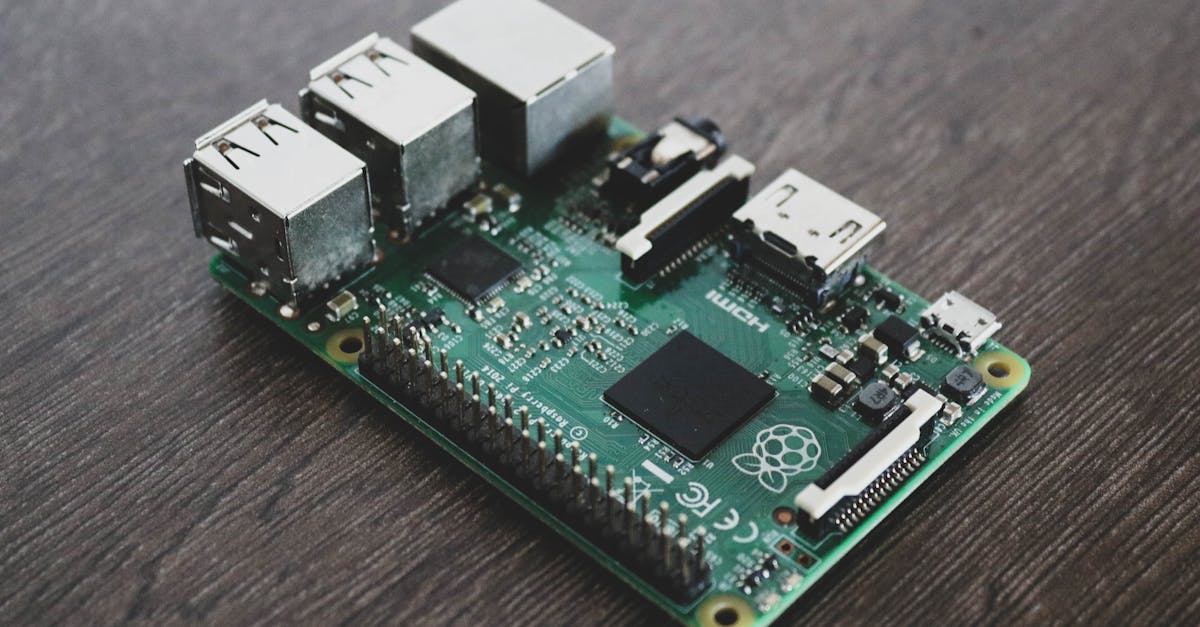

Hands-on guide to running Google's Gemma 4 E2B and E4B edge models on a Raspberry Pi and in the browser via WebGPU -- with real latency numbers, 128K context benchmarks, and honest comparisons to Phi-3-mini and other edge models.