comparison

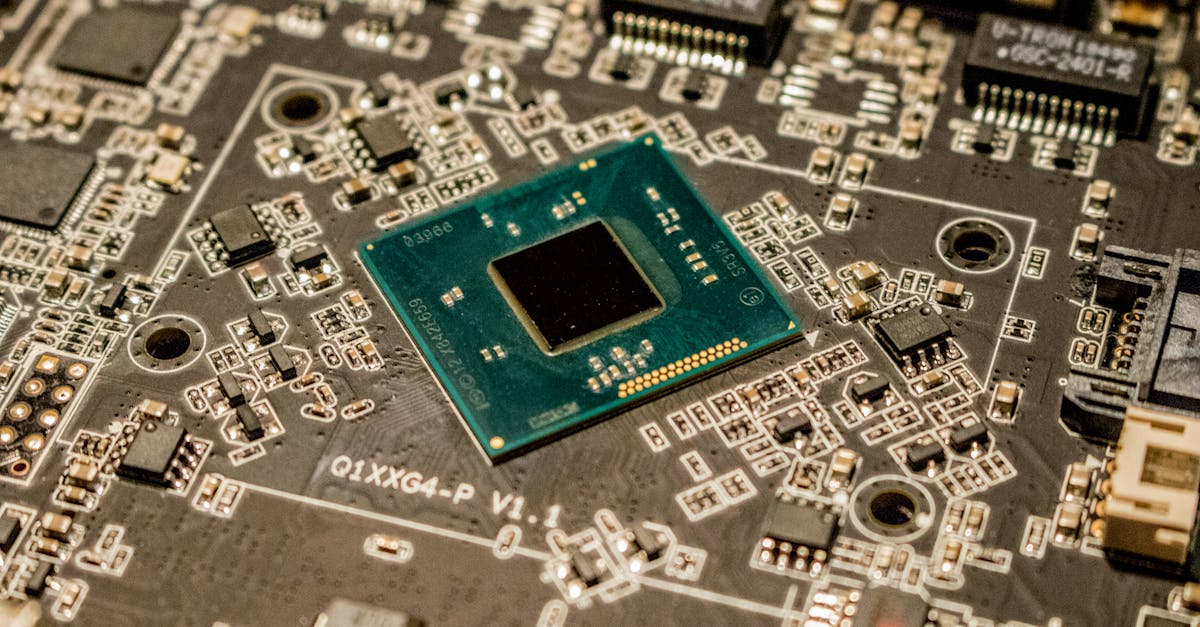

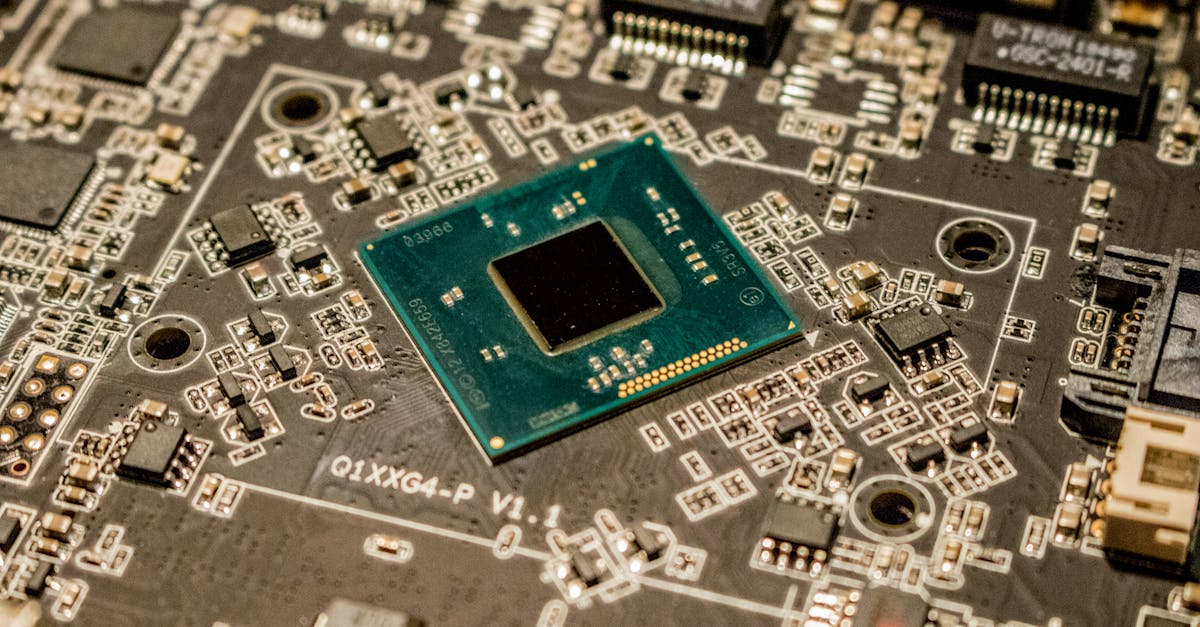

Qwen 3 vs Llama 3: Configuring Local LLMs for Actual Performance

Comparing Qwen 3 and Llama 3 for local inference — configuration tips, migration steps, and honest benchmarks from real-world testing.

llm, qwen, local-ai

Fix slow local LLM code completions with proper quantization, KV cache tuning, speculative decoding, and inference server configuration.